BLOG. 3 min read

ETFs: A Case For “Look-Through” Data In Risk Management And Beyond

May 21, 2021 by Patrick Braun

For more than 25 years, exchange-traded funds (ETFs) have transformed the global investment landscape. Born out of US equity market efficiency assumptions and advances in computing, these low-cost investment vehicles are found today in many institutional portfolios. Investors now use ETF products well beyond their original US equity bounds: these liquid beta instruments seek to replicate the performance of international equity indices, commodity indicators and selected fixed-income universes. Considering the continuous growth in assets allocated to ETFs, ESG-tilted ones in particular, this blog looks at some of the analytical consequences for institutional investors’ asset allocation and risk management processes.

Data convenience

For wealth managers, hedge funds and fund-of-funds specialists invested in ETFs, sourcing detailed daily data represents a significant operational burden. This often leads these investment professionals to limit their data scope to top-level ETF figures. Aside from values, these figures may include aggregated exposures such as sector, country and rating weights, beta, duration and historical volatility. This “top-down” data trade-off has consequences for investment modeling options and depth of analysis. ESG attributes and fund-level statistics can be added to feed efficient descriptive analytics and risk measurement frameworks. From an investment viewpoint, the core benefit of this simplified modeling approach is its consistency: index-based allocation decisions and ETF strategy implementation are well aligned.

Limitations quickly appear though. Depending on investors’ regulatory environments, the absence of “look-through” information might result in more onerous capital reserves. For example, insurance firms operating under the European Solvency II regulation can benefit from enhanced data transparency. In the absence of granular position details, their G7-market equity ETF positions may be exposed to Standard Formula “Type 2” equity shocks. More in-depth data could instead qualify these investments for less onerous “Type 1” stresses.
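To give a rough sense of scale, the sketch below compares the standalone equity charge on the same ETF holding under both treatments. It assumes the commonly quoted Standard Formula base stresses of 39% for “Type 1” and 49% for “Type 2” equities, sets the symmetric adjustment to zero, and ignores aggregation with other risk modules. The position size and function name are hypothetical, and the parameters should be checked against the current regulatory text before any real use.

```python
# Base equity stresses under the Solvency II Standard Formula.
# Assumption: values before the symmetric adjustment, which is set to zero here.
TYPE_1_SHOCK = 0.39  # assumed stress when look-through qualifies the holding as Type 1
TYPE_2_SHOCK = 0.49  # assumed stress when no look-through is available


def standalone_equity_charge(market_value: float, shock: float,
                             symmetric_adjustment: float = 0.0) -> float:
    """Loss in market value under an instantaneous equity shock."""
    return market_value * (shock + symmetric_adjustment)


# Hypothetical G7 equity ETF holding, in EUR.
etf_position = 10_000_000.0

without_look_through = standalone_equity_charge(etf_position, TYPE_2_SHOCK)
with_look_through = standalone_equity_charge(etf_position, TYPE_1_SHOCK)
saving = without_look_through - with_look_through

print(f"Charge without look-through (Type 2): {without_look_through:,.0f}")
print(f"Charge with look-through (Type 1):    {with_look_through:,.0f}")
print(f"Difference:                           {saving:,.0f}")
```

Under these assumptions, the gap is ten percentage points of the position's market value, before any diversification with other risks.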

A middle ground

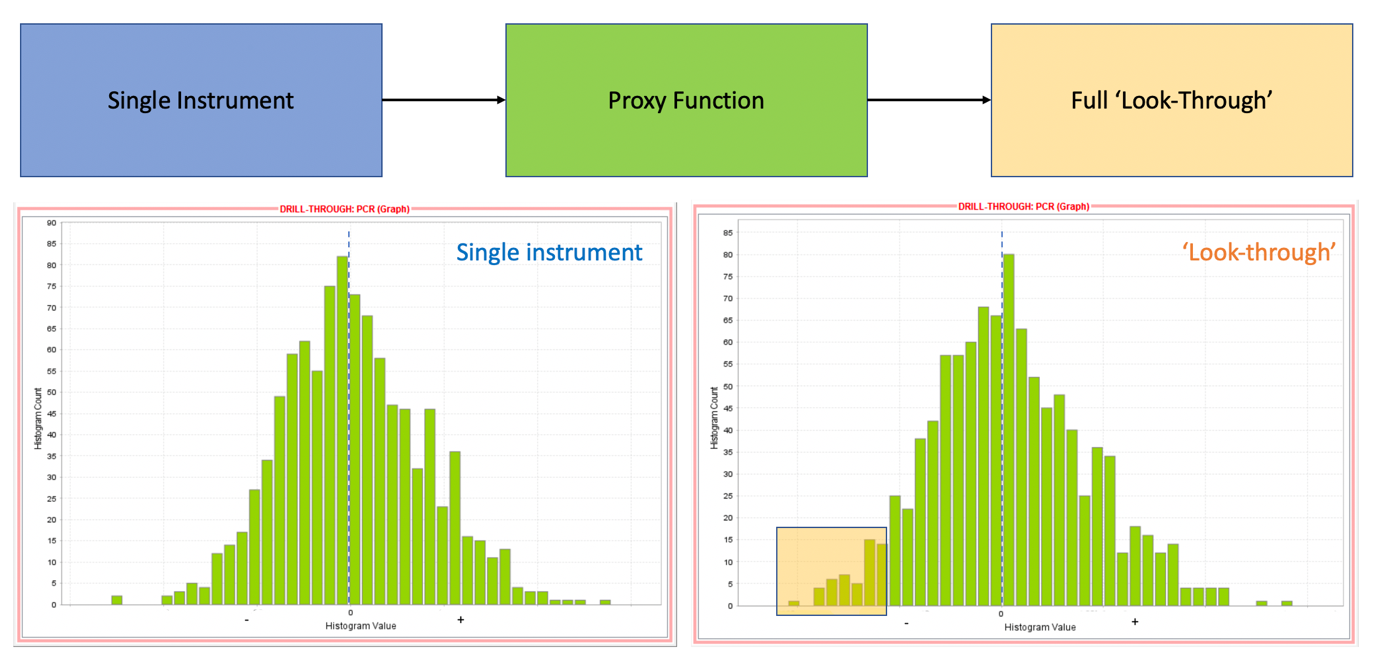

During the pre-2016 Solvency II preparation period, European insurance firms reviewed their data feeds and the operational governance around them. They also listed suitable options for risk-related asset modeling. Driven by actuarial considerations, many companies decided to use curve-fitting techniques to bring together proxied assets and liabilities from across their organizations. In that context, proxied instruments represent a good middle ground between data sourcing effort, economic sensitivity relevance and computational impact on large risk simulations. Defined as polynomials of various degrees, these proxy instruments mirror the exposures of financial positions and external funds to market risk factors. These factors can include equity, fixed income, credit, commodity, inflation and more.

A benefit of using flexible curve-fitting techniques on ETFs is the ability for users to perform aggregated sensitivities and advanced scenario analysis on the resulting proxies. For example, users can check the sensitivity of a fixed income ETF to spread widening, or to shifts in government bond or inflation curves. Unlike the previous single-instrument approach, these passive fund proxies are compatible with complex Value-at-Risk attribution schemes. Risk managers can attribute ETF-proxy simulation outputs to equity, government bond, corporate, inflation, forex, commodity and volatility factor blocks. The predictive insights come at a price though: curve-fitting processes require careful calibration. Otherwise, proxies can quickly show their limitations when factor simulations fall far outside the calibration data boundaries.
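The calibration caveat can be illustrated with a minimal, single-factor sketch. Everything here is an assumption made for illustration: the “true” fund value is a hypothetical exponential price response with a duration of 7, the proxy is a quadratic fitted by ordinary least squares, and the calibration window covers yield shifts of plus or minus 2%. Production proxies would typically involve many risk factors and cross terms.

```python
import math


def fit_polynomial(xs, ys, degree):
    """Least-squares polynomial fit via the normal equations.

    Returns coefficients [c0, c1, ...] for c0 + c1*x + c2*x**2 + ...
    """
    n = degree + 1
    # Augmented normal-equation system: (A^T A | A^T y).
    rows = [
        [sum(x ** (i + j) for x in xs) for j in range(n)]
        + [sum(y * x ** i for x, y in zip(xs, ys))]
        for i in range(n)
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(rows[r][col]))
        rows[col], rows[pivot] = rows[pivot], rows[col]
        for r in range(n):
            if r != col:
                factor = rows[r][col] / rows[col][col]
                rows[r] = [a - factor * b for a, b in zip(rows[r], rows[col])]
    return [rows[i][n] / rows[i][i] for i in range(n)]


def evaluate(coeffs, x):
    """Evaluate the polynomial proxy at factor value x."""
    return sum(c * x ** k for k, c in enumerate(coeffs))


def full_revaluation(yield_shift):
    """Hypothetical 'true' fund value per 100 invested (duration of 7)."""
    return 100.0 * math.exp(-7.0 * yield_shift)


# Calibrate on parallel yield shifts between -2% and +2%.
calibration_shifts = [i / 1000 for i in range(-20, 21)]
calibration_values = [full_revaluation(s) for s in calibration_shifts]
proxy = fit_polynomial(calibration_shifts, calibration_values, degree=2)

# Compare proxy and full revaluation inside and outside the calibration window.
inside_error = abs(evaluate(proxy, 0.01) - full_revaluation(0.01))
outside_error = abs(evaluate(proxy, 0.10) - full_revaluation(0.10))

print(f"Proxy error at +1% (inside window):   {inside_error:.4f}")
print(f"Proxy error at +10% (outside window): {outside_error:.4f}")
```

In this toy setup the proxy tracks the full revaluation closely within the calibration window and drifts by several points of value at a +10% shift, which is the kind of behavior that makes calibration ranges worth monitoring.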

The real picture

From a risk management perspective, the real picture of a fund blending long and short equities, futures, fixed income positions and ETFs across asset classes can only be gained using a “look-through” approach. This means modeling individual ETF positions at the most granular level in a consistent analytics framework, which requires pricing libraries, simulation and fund-of-funds aggregation capabilities. For most investment organizations, the functional benefits of this approach should far outweigh the marginal operational data work. To start with, it allows front- and middle-office stakeholders to confirm net and gross single-name exposures across their overall fund.
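As a minimal sketch of that first step, the snippet below combines direct positions with ETF constituent weights to produce net and gross exposures per name. The security names, position values and weights are all hypothetical, and a real implementation would also need to handle futures, currencies and nested funds.

```python
from collections import defaultdict

# Hypothetical direct positions, in EUR (negative values are shorts).
direct_positions = {"STOCK_A": 500_000.0, "STOCK_B": -200_000.0}

# Hypothetical ETF holdings: market value held and constituent weights.
etf_positions = {
    "EQUITY_ETF": {
        "value": 1_000_000.0,
        "weights": {"STOCK_A": 0.25, "STOCK_B": 0.25, "STOCK_C": 0.5},
    },
}


def look_through_exposures(direct, etfs):
    """Return (net, gross) single-name exposures including ETF constituents."""
    net = defaultdict(float)
    gross = defaultdict(float)
    for name, value in direct.items():
        net[name] += value
        gross[name] += abs(value)
    for etf in etfs.values():
        for name, weight in etf["weights"].items():
            exposure = etf["value"] * weight
            net[name] += exposure
            gross[name] += abs(exposure)
    return dict(net), dict(gross)


net, gross = look_through_exposures(direct_positions, etf_positions)
for name in sorted(net):
    print(f"{name}: net={net[name]:,.0f} gross={gross[name]:,.0f}")
```

In this example, the short in STOCK_B is more than offset by the ETF's holding of the same name, which a fund-level view of the ETF would not reveal.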

As shown in this graphic, ETF “look-through” data coupled with advanced simulations can help investment and risk management teams investigate return distribution tail risks and diversification effects. Leveraging these insights, the teams can implement well-targeted derivative overlays as necessary. Asset allocators can also use “look-through” to assess equity style tilts across their ETF investments and better manage unintended drifts on popular size, value, growth and momentum factors.
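A small sketch can make the tail-risk and diversification idea concrete. It assumes scenario profit-and-loss figures are already available for each sleeve of the fund, for instance from a look-through simulation. The ten scenarios below are made up for illustration, and the Value-at-Risk function uses a simple empirical quantile rather than any particular vendor methodology.

```python
import math

# Hypothetical P&L per scenario for two sleeves of a fund (same scenario order).
equity_pnl = [-120, -80, -30, -10, 5, 15, 25, 40, 60, 90]
bond_pnl = [30, 20, -25, 5, -10, -15, 10, -5, -20, 0]


def value_at_risk(pnl, confidence_pct=90):
    """Empirical VaR: the loss at the given confidence level across scenarios."""
    losses = sorted(-p for p in pnl)  # ascending, largest loss last
    index = math.ceil(confidence_pct * len(losses) / 100) - 1
    return losses[index]


# Portfolio P&L is the scenario-by-scenario sum of the sleeves.
portfolio_pnl = [e + b for e, b in zip(equity_pnl, bond_pnl)]

equity_var = value_at_risk(equity_pnl)
bond_var = value_at_risk(bond_pnl)
portfolio_var = value_at_risk(portfolio_pnl)
diversification_benefit = equity_var + bond_var - portfolio_var

print(f"Equity sleeve VaR:       {equity_var}")
print(f"Bond sleeve VaR:         {bond_var}")
print(f"Portfolio VaR:           {portfolio_var}")
print(f"Diversification benefit: {diversification_benefit}")
```

Because the scenarios are aligned across sleeves, the portfolio figure reflects how losses coincide or offset each other, which the sum of standalone figures cannot show.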

Financial news from around the world reminds risk management and investment professionals that aggregated exposure information might be sufficient on most business days. When it comes to risk management though, granular information matters every single day. In the current cost-conscious business environment, is it time for more financial institutions to assess how data granularity decisions could impact their investment and risk management processes?

To learn more about SS&C Algorithmics’ financial risk management solutions, please visit the "SS&C Algorithmics Managed Data Analytics Service" page.

Written by Patrick Braun

Director, Buy-Side Product Management